Angler: Helping Machine Translation Practitioners Prioritize Model Improvements

(*Authors contributed equally)

Abstract

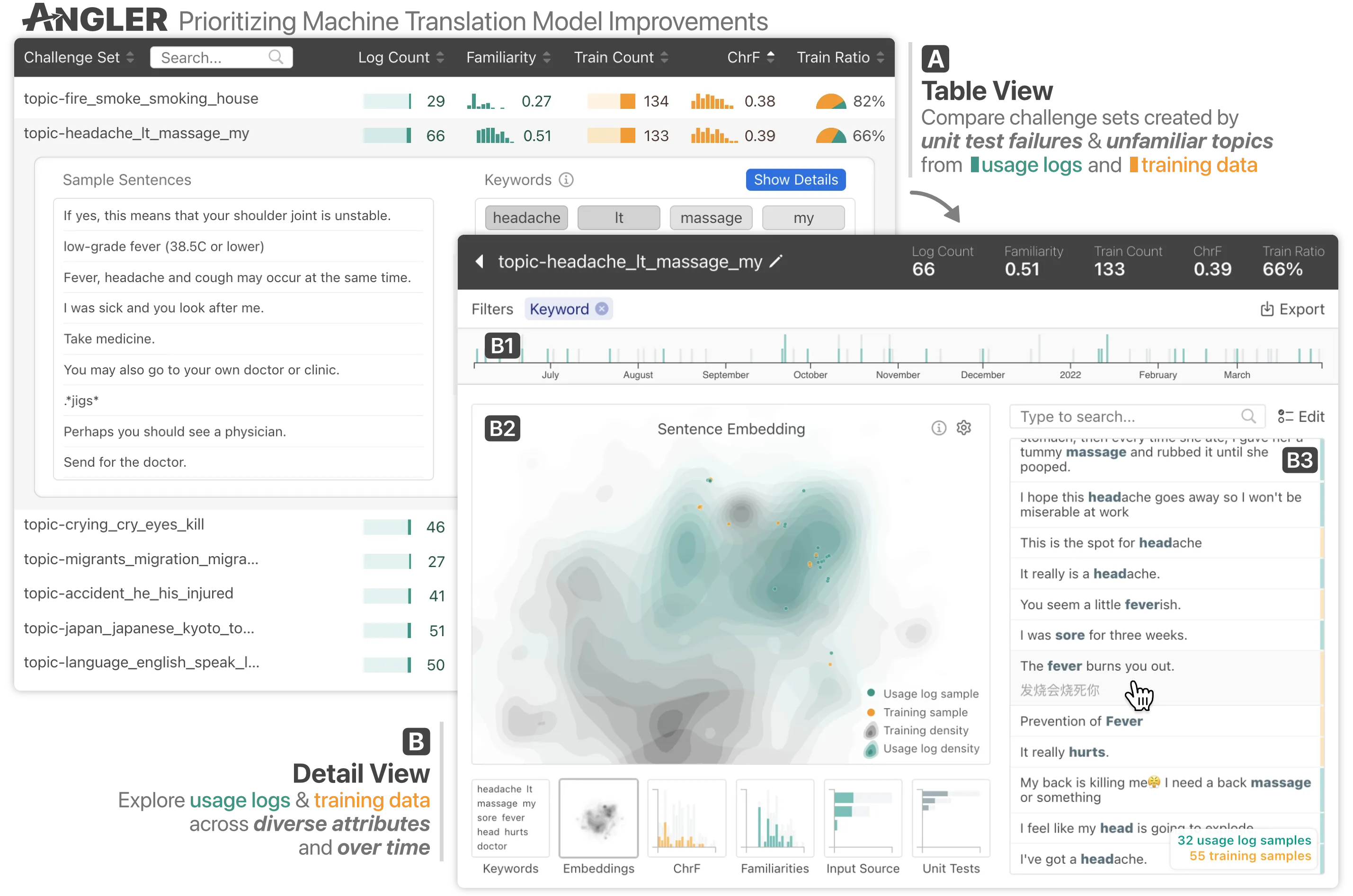

Machine learning (ML) models can fail in unexpected ways in the real world, but not all model failures are equal. With finite time and resources, ML practitioners are forced to prioritize their model debugging and improvement efforts. Through interviews with 13 ML practitioners, we found that they construct small targeted test sets to estimate an error's nature, scope, and impact on users. We built on this insight in a case study with machine translation models and developed Angler, an interactive visual analytics tool to help practitioners prioritize model improvements. In an observational study with 7 machine translation experts, we used Angler to understand prioritization practices when the input space is infinite and obtaining reliable signals of model quality is expensive. Our study revealed that participants could form more interesting and user-focused hypotheses for prioritization by analyzing quantitative summary statistics and by qualitatively assessing data through reading sentences.

Citation

@inproceedings{robertsonAnglerHelpingMachine2023,
  title = {Angler: {{Helping Machine Translation Practitioners Prioritize Model Improvements}}},
  booktitle = {{{CHI Conference}} on {{Human Factors}} in {{Computing Systems}}},
  author = {Robertson, Samantha and Wang, Zijie J. and Moritz, Dominik and Kery, Mary Beth and Hohman, Fred},
  year = {2023},
  doi = {10.1145/3544548.3580790},
  langid = {english}
}